In Training Volume and Muscle Growth: Part 1 I looked at four different studies, one of which I threw out for having what I consider absolutely absurd, reality-failing results. Continuing from there I want to look at three more studies (published as of October 15th, 2018, when this was written) to complete the set. This will all lead into the final Part 3 where I’ll look at the results in overview to see if any general conclusions can be drawn regarding the questions I originally posed.

Effects of Graded Whey Supplementation during Extreme-Volume Resistance Training

The next paper is by Haun et al. and was published in Frontiers in Nutrition in 2018. It is notable for having been (at least partially) funded by Renaissance Periodization and having Mike Israetel as one of the authors. I do NOT mention this to dismiss it out of hand in a “Who funded it?” kind of way because that’s crap. The research stands on its own merits or it doesn’t.

Rather, I am mentioning it since it was clearly an attempt to support/test Mike’s ideas about volume, MRV, etc. Of some trivial interest, it literally came out like 3 days before Brad’s. Which is a shame because had it come out last year, it would have brought up a critically important issue that will have to be considered going forwards on this topic. More below.

As you’ll see, it also had a semi-negative outcome in terms of NOT supporting what I suspect Mike was trying to prove to begin with. Despite this, I am also told that Mike has adjusted his workout template volumes down in response to the results of this paper.

That’s the mark of intellectual honesty since I’m 100% sure they wanted the opposite result of what they got. Or Mike did anyhow. Not only did they publish essentially a negative finding but Mike adjusted his recommendations (mind you, it would have been faster to have just listened to me in the first place but no matter).

Goals of the Paper

The paper actually had a couple of different goals. One was to examine the response of muscle growth to progressively increasing volumes. Basically to see what happened as volume went up weekly. This is where I will put my focus as hypertrophy has been my criterion endpoint from the outset.

But a second goal was to see if increasing protein intake along with increasing volume had any additional benefit (the title makes it sound like this was the primary goal and it might be. Whatever, there were two goals). The idea being that getting optimal growth from increasing volumes requires more protein or whatever.

Dietarily, the groups were either supplemented with maltodextrin, a single serving of whey or given graded doses of whey protein (from 25-150 grams/day). But they all did the same training program. Since the dietary manipulation ended up having zero effect on the results, I won’t mention it again. The researchers didn’t.

Training Volume

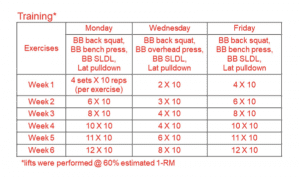

In it 34 subjects were recruited with 3 dropouts resulting in 31 total subjects. The participants were resistance-trained with at least 1 year of self-reported resistance training experience and a back squat 1RM of greater than or equal to 1.5 times body weight. The subjects performed the following workout with barbell back squat, barbell bench press, barbell SLDL and lat pulldown at every workout. So one compound movement per muscle group(s).

The sets were done at 60% of maximum and you can see how training volumes increased weekly from 10, 15, 20, 24, 28 and finally 32 sets/week. Yes, 32 sets per week of squats. Even at 60% of max, well…yeesh. That makes GVT look sane by comparison. That said…

It’s a little bit tough to tell from the methods how the workout was performed but it almost looks like one set of each movement was done before moving to the next, the next and the next and then going back to the first exercise. As 2′ were given between each exercise unless the subject wanted to go sooner or needed a bit longer, this is like 10 minutes between sets of any individual exercise. That is assuming I’m reading this right.

Exercises were completed one set at a time, in the following order during each training session: Days 1 and 3—barbell (BB) back squat, BB bench press, BB SLDL, and an underhand grip cable machine pulldown exercise designed to target the elbow flexors and latissimus dorsi muscles (Lat Pulldown); Day 2— BB back squat, BB overhead (OH) press, BB SLDL, and Lat Pulldown.

A single set of one exercise was completed, followed by a set of each of the succeeding exercises before starting back at the first exercise of the session (e.g., compound sets or rounds). Participants were recommended to take 2min of rest between each exercise of the compound set. Additionally, participants were recommended to take 2 min of rest between each compound set.

However, if participants felt prepared to execute exercises with appropriate technique under investigator supervision they were allowed to proceed to the next exercise without 2 min of rest. Additionally, if participants desired slightly longer than 2 min of rest, this was allowed with intention for the participant to execute the programmed training volume in <2 h each training session.
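The "like 10 minutes" figure can be sanity-checked with a back-of-the-envelope sketch. The four exercises per round and the recommended 2 minutes of rest come from the methods quoted above; the 40 seconds per set is purely an assumption made for illustration, since the paper's set duration is not given here.

```python
# Rough estimate of the time between two sets of the SAME exercise
# when four exercises are rotated with ~2 min of rest after each one.

EXERCISES_PER_ROUND = 4      # squat, press, SLDL, pulldown (from the quoted methods)
REST_MIN = 2.0               # recommended rest between exercises (from the quoted methods)
SET_DURATION_MIN = 40 / 60   # ASSUMPTION: roughly 40 s to perform one set of 10


def minutes_between_same_exercise(n_exercises, rest_min, set_min):
    """Time from finishing one set of an exercise to starting its next set."""
    rests = n_exercises * rest_min            # one rest after every exercise in the round
    other_sets = (n_exercises - 1) * set_min  # time spent on the other exercises' sets
    return rests + other_sets


gap = minutes_between_same_exercise(EXERCISES_PER_ROUND, REST_MIN, SET_DURATION_MIN)
print(f"about {gap:.0f} minutes between squat sets")
```

Shorter sets or skipped rests (which the protocol allowed) would pull the number down toward 8 minutes or less, so treat this as an upper-end estimate.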

Subjects reported their Reps in Reserve (RIR) for each set which just means how many more reps they think they could have done. So an RIR of 3 on a set of 10 means they think they could have done 13 (and this method is fairly accurate for trained folks).

The average RIR started at roughly 3.7 ± 1 and this went up slightly to 4.3 ± 1.6 by week 6. So on their sets of 10, they could have done 13-14 reps. Basically, it was all pretty submaximal as would be expected for sets of 10 at 60% with an almost 10 minute rest interval. Ten reps is usually ~75% and with a 10 minute rest there simply isn’t any accumulated fatigue occurring.

Body Composition Measurement

Body composition changes were measured by DEXA (which is at best rough for estimating true muscular change) but muscle thickness via Ultrasound was also measured for the vastus lateralis (VL) and biceps.

Biopsies (where a chunk of muscle is literally cut out of it) of the vastus lateralis were also taken to determine the physiological cross sectional area of actual muscle fibers. While way more invasive (with its own limitations), biopsy is arguably a far more direct method of assessing fiber size changes since you are literally looking at the muscle fiber area directly.

Of some interest, total body water (TBW) and extra cellular water (ECW) were measured via BIA (its only real use) as this is representative of inflammation and edema (basically, the body retains water when inflammation is present) and this will be important in a moment. Measurements of mood (POMS, profile of mood state) and muscle tenderness were also taken, basically to check for overtraining and inflammation although I won’t focus on this. This was a thoroughly done study to be sure.

Measurements were made before week 1, at week 3 and again at week 6. And here is where it gets interesting since the results ended up being pretty different from Weeks 1-3 and Weeks 4-6 as the volumes got stupid.

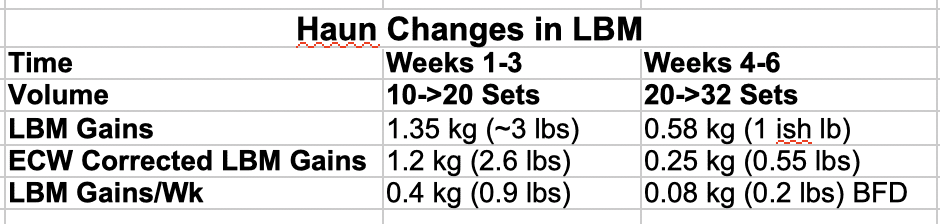

LBM Changes

First let me look at the lean body mass changes. From week 1 to week 3 (10->20 sets/week), the subjects gained 1.35 kg/3ish pounds with that value dropping to 0.85 kg/2ish pounds from week 3 to week 6 (20->32 sets/week). So already there is a reduction in training gains with more volume producing far less gains. Still size is size and 2 lbs in 3 weeks is still good, right? Hang on.

The study did something I haven’t seen before which was to correct the change in LBM for extracellular water (ECW) which will show up as LBM. Basically swelling and edema (not the same as sarcoplasmic hypertrophy) that can occur.

And when this correction was made, the LBM changes dropped from 1.35 kg/3ish pounds to 1.18 kg/2.6 lbs from week 1 to week 3, which was about the same as before, and from 0.85 kg/2ish pounds to a statistically insignificant 0.25 kg/0.55 lbs from week 3 to week 6. So they gained about 0.9 lb/week for the first 3 weeks and this dropped to an insignificant 0.2 lbs/week from 3 to 6 when the volume got stupid.
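The unit conversions and weekly rates above can be reproduced with a short sketch. The kg figures are the ones quoted in this LBM discussion; treating the gap between the uncorrected and corrected values as the fluid share is a simplifying assumption for illustration, not necessarily how the paper computed its correction.

```python
KG_TO_LB = 2.20462      # pounds per kilogram
WEEKS_PER_BLOCK = 3     # each measurement block spans three training weeks

# LBM changes in kg as quoted in the text: (uncorrected DXA, ECW-corrected)
lbm_changes = {
    "weeks 1-3": (1.35, 1.18),
    "weeks 3-6": (0.85, 0.25),
}


def summarize(uncorrected_kg, corrected_kg, weeks=WEEKS_PER_BLOCK):
    """Convert the corrected change to pounds and a per-week rate."""
    corrected_lb = corrected_kg * KG_TO_LB
    return {
        "corrected_lb": round(corrected_lb, 2),
        "lb_per_week": round(corrected_lb / weeks, 2),
        # ASSUMPTION: uncorrected minus corrected is the fluid (ECW) share
        "implied_fluid_kg": round(uncorrected_kg - corrected_kg, 2),
    }


for block, (raw, corrected) in lbm_changes.items():
    print(block, summarize(raw, corrected))
```

On these numbers the implied fluid share is small in the first block and makes up most of the uncorrected change in the second, which is the pattern the text describes.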

Basically the increased LBM in the last three weeks of the study was just fluid accumulation (perhaps lending credence to the idea of pump “growth” occurring, just not by the previously thought mechanism).

I’ve shown this data below.

In the abstract (which most in the industry don’t read past) the authors try to spin this as the higher volumes still being superior but in the discussion itself they state:

Thus, when considering uncorrected DXA LBM changes, one interpretation of these data is that participants did not experience a hypertrophy threshold to increasing volumes up to 32 sets per week.

However, if accounting for ECW changes during RT does indeed better reflect changes in functional muscle mass, then it is apparent participants were approaching a maximal adaptable volume at ~20 sets per exercise per week.

Note their use of the word “if” after however in the last sentence. They are kind of hedging their bets here but it is NOT yet clear if you do or do not have to correct for ECW when measuring changes in muscle thickness via Ultrasound. It’s hard not to see how it would given how Ultrasound works but that may reflect my own bias on the matter.

In their discussion they do state:

In this regard, Yamada et al. (24) suggest expansions of ECW may be representative of edema or inflammation and can mask true alterations in functional skeletal muscle mass. Further, these authors suggest the measurements of fluid compartmentalization (e.g., ICW, ECW), which are not measured by DXA, are needed if accurate representation of functional changes in LBM are to be inferred.

Suggesting that ECW can skew Ultrasound measurements and must be both measured and accounted for to get any idea about actual changes in skeletal muscle size or amount. More importantly, this has to be directly EXAMINED before we go forwards with any more high volume studies. I’ll harp on this but we must KNOW if the increase in ECW/edema impacts on measurements or not.

Regardless, a cap was seen where anything over 20 sets had no further advantage in total LBM gains. But as I’ve said, LBM gains themselves aren’t necessarily indicative of actual muscular growth since they can represent a lot of things (perhaps the increased LBM even in weeks 1-3 was glycogen storage; since it was not measured, we can’t know).

Muscle Hypertrophy

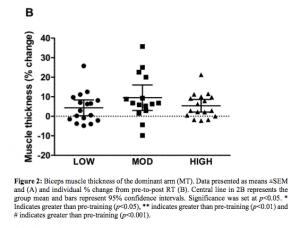

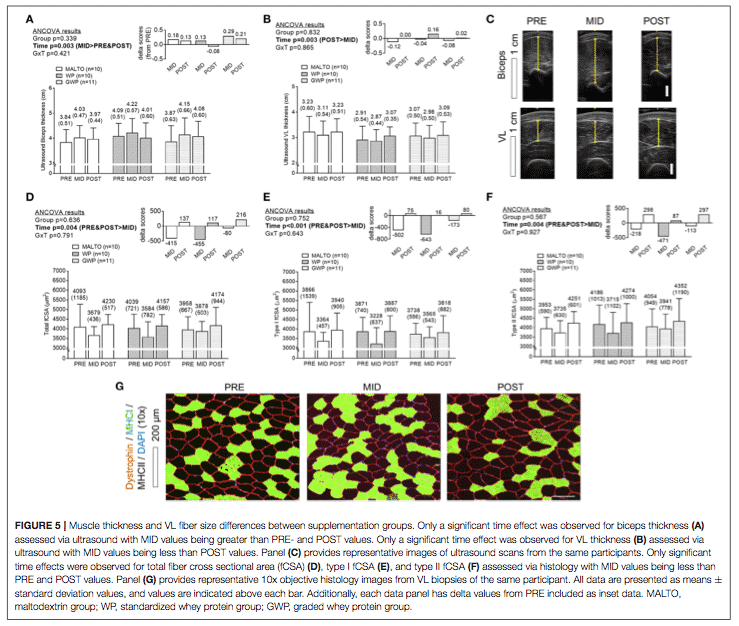

So let’s look at the changes in thickness via Ultrasound. As with Radaelli I’m not providing specific numbers because the raw data wasn’t presented, the graphics in the paper are tiny and my eyes hurt trying to read them. So I can’t even begin to try to extrapolate it out (and if I did I’d be subject to my bias guessing at the numbers which I won’t do). But here it is.

The verbiage in the results is even more obscure in terms of what happened. The best I can do is quote from the legend on Figure 5 above.

Muscle thickness and VL fiber size differences between supplementation groups. Only a significant time effect was observed for biceps thickness (A) assessed via ultrasound with MID values being greater than PRE- and POST values.

Only a significant time effect was observed for VL thickness (B) assessed via ultrasound with MID values being less than POST values. Panel (C) provides representative images of ultrasound scans from the same participants. Only significant time effects were observed for total fiber cross sectional area (fCSA) (D), type I fCSA (E), and type II fCSA (F) assessed via histology with MID values being less than PRE and POST values.

Panel (G) provides representative 10x objective histology images from VL biopsies of the same participant. All data are presented as means ± standard deviation values, and values are indicated above each bar. Additionally, each data panel has delta values from PRE included as inset data. MALTO, maltodextrin group; WP, standardized whey protein group; GWP, graded whey protein group.

This is really hard to parse. By Ultrasound, biceps thickness was bigger at week 3 than at the beginning or the end of the study, possibly suggesting that it increased in size and then decreased again. For quads it’s even weirder. By the Ultrasound the Week 6 size was greater than the Week 3 size but, statistically neither seems to have been different than the starting value. So while there does appear to be growth from Week 3 to 6, there was no net size gain from Week 1 to 6.

As I’ll discuss below, the biopsy data showed shrinkage from Week 1 to 3 before regrowth to Week 6 and it’s possible that the Ultrasound simply didn’t pick the same pattern up statistically. Perhaps the larger point is that there was no net growth from Week 1 to 6. That’s a lot of squatting to achieve nothing.

Mind you, overall, the actual total change in muscle thickness via Ultrasound appears to have been absolutely tiny to begin with. They state:

When summing biceps and VL thicknesses at each level of time, there were no significant differences between groups at each level of time. However, a significant main effect of time revealed that the summed values of thickness measurements were significantly higher at POST compared to PRE (p = 0.049). The summed value at POST was 7.16 ± 0.77 cm where the summed value was 6.98 ± 0.81 cm at PRE (data not shown).

What they actually did here was add up the total growth for the biceps and VL to make the number larger (and presumably reach statistical significance). And looked at that way, it was a bit higher at 6 weeks than at the beginning of the study. But look at that change, the summed total of both muscles was all of 0.18 cm (7.16 – 6.98) or 1.8 mm TOTAL (if this was split evenly and I don’t know if it was, that’s less than 1 mm growth per muscle).
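For anyone who wants to check the arithmetic, here is a minimal sketch using only the two summed values from the quoted passage. The even split between biceps and VL is an assumption (as noted above, the actual split isn't known), and the percentage is just the same change expressed relative to the starting value.

```python
# Summed biceps + VL thickness in cm, from the quoted passage
pre_cm, post_cm = 6.98, 7.16

total_change_mm = (post_cm - pre_cm) * 10            # cm -> mm
percent_change = (post_cm - pre_cm) / pre_cm * 100   # relative to the starting sum
per_muscle_mm = total_change_mm / 2                  # ASSUMPTION: change split evenly

print(f"total change:       {total_change_mm:.1f} mm")
print(f"relative change:    {percent_change:.1f} %")
print(f"per muscle (even):  {per_muscle_mm:.1f} mm")
```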

And the only way they got this to be significant was by adding up two different muscles (a scientific approach called playing silly buggers). It would be like a training study where the subjects improved their bench by 15 lbs and squat by 25 lbs and neither was meaningful but if you ADD THEM UP, suddenly the total 40 lb improvement from the training program is significant. Feh.

The Biopsy Data

Looking at the quad biopsy data, quad size actually went down from Week 1 to 3 (visible in D/E/F in the figure above) before returning to the starting size by week 6. This backs up the tentative conclusion on the Ultrasound above. Basically at the lower volumes, quads shrank before increasing to their starting size at the end of the study. If they hadn’t measured at week 3, they would have seen zero change from start to finish.

This is perhaps the more interesting finding, that there is a possible discrepancy in growth requirements for upper and lower body. For the upper body, biceps size went up from week 1 to 3 before going back down but ended up right where it started: a lot of work to achieve nothing.

Not only was more volume not better, it was worse. In contrast, quads (by biopsy anyhow) shrank from Weeks 1 to 3 before growing from Week 4 to 6. This suggests that the lower volumes early on were insufficient but doesn’t change the fact that, by biopsy, there was no overall muscle growth from Week 1 to 6. The subjects did a LOT of squatting to make zero gains.

This does suggest, as I’ve noted already, that upper and lower body might have different optimal volume requirements. Perhaps if the quad volume had started at a higher level, there would not have been size loss from Weeks 1 to 3, or there would have been a net size gain from Weeks 1 to 6. Perhaps if upper body volume had been capped at 20 sets, there would have been no shrinkage or loss of size or even a further increase. Perhaps perhaps perhaps. Since they didn’t do it, we can’t know.

This is all still colored by the fact that any total growth of any sort was absolutely tiny. When you have to add both values together to get it to be significant, that’s pretty damn telling. This is what the Ostrowski study got in triceps alone with 14 sets/week.

Mind you, this study was a mere 6 weeks which is seriously short. Perhaps we should consider that significant growth in that time period. Perhaps we shouldn’t. Perhaps over a longer study, perhaps with slower volume increases, different results would have been seen. Perhaps, perhaps, perhaps. This is what they did and this is the data they have. In this vein they state:

Finally, while a 6-week RT program seems rather abbreviated, we chose to implement this duration due to the concern a priori that the implemented volume would lead to injuries past 6 weeks of training.

Which is a consideration a lot of people seem to be missing in the current volume wars. Even IF super high volumes generate better growth, what happens when they are followed over extended periods? I’ll tell you what happens: overuse and other injuries, overtraining, burnout, etc, none of which are beneficial for long-term progress. It’s just something nobody seems to be talking about outside of a few folks on my Facebook group.

Simply, even IF massive volumes generate better short-term growth (and the overall indication is that they do not), if they get you hurt that’s not a good thing. Long-term progress is the goal here and that usually means a more moderate approach over time. Put differently, it’s better to do a series of training cycles with 15 sets/week that gets some growth than to do one with 30 sets/week that keeps you out of the gym for 3 months with tendinitis.

Was the Growth Sarcoplasmic?

Addendum: After the above paper was published, Haun et al. re-analyzed some of the data. In that re-analysis they found that the growth was primarily “sarcoplasmic”, meaning that it was in the non-contractile part of the muscle.

A Comment on Brad Schoenfeld’s Study

Since it will likely be used to rebut the above, let me note that Brad Schoenfeld’s study, discussed next, used high volumes over 8 weeks and saw no dropouts due to injury. Well, 8 vs. 6 weeks is not much of a difference and it’s when absurd levels of training are followed for months at a time (as most tend to do) that they get into trouble and either overtrain or get injured.

What I’m saying is that I want you to do the workout for 3-4 months and then get back to me. As well, the nature of the workout in Brad’s study had some real implications for training poundages that were used. It’s one thing to do all the volume when the overall loading is low and another to do it when it’s heavy. Only the latter wears people out. Performing endless warm-up sets as in Haun et al or a bunch of submaximal “to form failure” sets doesn’t. But it’s also an inefficient way to train.

Back to Extracellular Water

Ignoring that for the time being, there is another issue that has to be addressed which is the ECW issue I mentioned above. IF increased ECW is throwing off the Ultrasound, then it is only impacting on high volumes of training and it MUST be accounted for. But first it has to be determined if it impacts anything.

Do a pilot study, do something, but figure this out before another of these damn studies is done. All you have to do is measure muscle thickness when there is no increase in ECW, then do something (like 32 sets of squats) to increase ECW and re-measure it when true growth could not have occurred. BIA can clearly pick up ECW so this shouldn’t be terribly difficult to do methodologically.

Either muscle thickness does or does not change in response to it. If it doesn’t, there’s no problem. If it does, there is a HUGE problem where high volume studies are now measuring changes in ECW rather than actual growth. Or, at the very least, ECW changes are causing an artificial elevation of the Ultrasound BUT ONLY IN THE HIGH VOLUME GROUPS which would make it look like they are generating MORE growth than they actually are. But ONLY in the high volume groups.

Is Volume the Primary Driver of Growth?

A second issue I want to discuss. A current theme in the training world is that “volume is the primary driver on hypertrophy” and this study was clearly designed to test that. Except that what it really did was disprove the idea entirely.

Keep in mind that the intensity of training was 60% of maximum with stupid long rest periods and the subjects reported 3-4 reps in reserve during the study. So it was pretty submaximal for endless sets of 10 with little to no cumulative fatigue (even Poliquin’s original GVT was 10X10@60% on 1 minute and it got HARD by set 10 as fatigue set in).

And despite throwing all the volume at folks, growth was, factually, sucky, a mere 1.8 mm which they only achieved by adding up biceps and VL to begin with. Basically, with insufficient TENSION overload, all the volume in the world doesn’t generate meaningful growth.

It does cause lots of water retention and maybe this does lend some credence to the whole idea of pump growth, it just happens to be increased ECW rather than sarcoplasmic growth. So pump it up with light weights and you look bigger (maybe, for a little bit) and weigh more. But it’s just fluid accumulation. Or you could lift real weights for less volume and actually grow better.

And all of the above is true because TENSION is the primary driver on hypertrophy and this study shows it in spades (in his apologist article, Eric even states this very thing, citing the same study I’ve been referring people to for almost 20 years). Ostrowski got much more growth in the triceps (2 mm) with only 14 sets by using heavy loads (admittedly over a longer time period).

So did the two GVT studies (which at least had some heavy work, 70% for sets of 10). A relatively small study by Mangine showed the same (when I mentioned this study to Brad he hand-waved it away for reasons I forget) where much lower volumes at a higher intensity generated more growth and strength than higher volumes at a lower intensity.

Tension/progressive tension overload trumps volume every time.

Progressive Tension Overload Always Wins

It’s really that simple: tension/progressive muscular tension overload beats volume every time. Yes, volume plays a role but, in the absence of sufficient tension, it doesn’t mean shit. That means that volume can’t be the primary driver because that’s not what primary means. If this is unclear consider the following:

- Insufficient intensity + high volumes = dick for growth (this study)

- Sufficient intensity + low volumes = growth (all other studies)

- Sufficient intensity + higher volumes SOMETIMES = more growth (all other studies)

What’s the common factor in the two situations that generate growth? It’s tension, NOT volume. That makes tension overload primary as without it, growth is effectively nil. Volume is purely secondary to sufficient tension overload and there’s no escaping that fact. Yes, more volume AT A SUFFICIENT INTENSITY may yield more growth. But ALL the volume at submaximal intensities accomplishes jack squat.

Since I imagine some will use them to rebut the above, I should address the low-load training studies (usually using 30% 1RM to FAILURE) that do generate growth. This is true but note my capitalized word. By taking light loads to failure, the muscle fibers ARE exposed to high tension loads at the end of the set.

This is due to fatigue occurring and requiring the high threshold fibers to be recruited to keep the weight moving until FAILURE occurs. And this is distinctly different than doing 10 non-fatiguing sets (remember RIR never went below 3-4) at 60% with 10 minutes rest.

The latter simply never exposes muscle fibers to a high-tension overload. And clearly doesn’t work very well (if at all). Even if it did, why bother with 20 light sets when, most likely, 8-10 heavy sets would be more effective?

Summary

As their own conclusion in the discussion (not the abstract) shows, the growth response seemed to hit a top-end cap of 20 sets with more sets generating insignificant gains in LBM but rather increased water storage due to edema/inflammation.

It suggests a difference in optimal volume levels for upper and lower body as well, with the upper body seemingly responding better to lower volumes and the legs actually getting smaller with the same lower volumes (before returning to their initial size as volume went up).

But this has to be considered with the fact that the overall growth was minuscule, only being even remotely significant with the silly buggers method of adding biceps and VL growth together. Admittedly, this was a short study using insufficient loads (also demonstrating clearly that volume is NOT the primary driver on growth because volume didn’t drive jack shit for growth here).

Perhaps the most interesting point is that water retention may have to be accounted for going forwards since it can clearly skew the results and make it look like more growth is occurring than actually is. And since it looks like higher volume causes more water retention than lower, well….this has HUGE implications for a lot of volume studies.

Because if water retention is ONLY an issue beyond 20 sets/week, then it means that any study comparing volumes below and above this level has a confound that must be taken into account for the high volume groups (but NOT the lower).

That is, if you compare 12 sets to 24, the 24 set group MUST be adjusted for ECW but the 12 set group doesn’t have to be. And IF that ECW is shown to impact on Ultrasound measurements, this means that any “apparent” benefit of the highest volumes might just be a measurement artifact of increased ECW.

Note my use of the word might. We don’t know if ECW colors Ultrasound or not. But it MUST be studied before another one of these studies is done.

Bringing me to Brad Schoenfeld’s study.

Resistance Training Volume Enhances Muscle Hypertrophy but not Strength in Trained Men

This is the paper by Brad Schoenfeld et al. that has been a driver on all of the recent Internet drama. It’s been discussed to death and I’ll try to keep this short (and probably fail). It took 45 college-aged men with at least 1 year of training experience (the single reported value was like 4 years ± 3.9 or something). Of those initial 45, 11 dropped out (not due to the workouts) leaving 34 total subjects and leaving the study statistically underpowered.

Training Volume

They did the following workout: flat barbell bench press, barbell military press, wide grip lat pulldown, seated cable row, back squat, leg press and one-legged leg extension. This was done three times weekly for either 1, 3 or 5 sets per exercise.

For the upper body, this meant that the volumes ranged from 6 to 18 to 30 sets and for lower it was 9, 27 or 45 sets per week. Sets were 8-12RM (to concentric/form failure supposedly) on a 90 second rest interval. Which is really already failing the reality check.
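The weekly set counts follow directly from the exercise list. A quick sketch, assuming (my grouping, not spelled out in the text) that the two presses count toward one upper-body muscle group, the two pulls toward another, and all three leg movements toward the quads:

```python
SESSIONS_PER_WEEK = 3
SETS_PER_EXERCISE = (1, 3, 5)   # the three study groups

# ASSUMPTION: how the seven exercises map onto the counted body regions
EXERCISES_PER_REGION = {
    "upper body (two presses or two pulls)": 2,
    "lower body (squat, leg press, leg extension)": 3,
}


def weekly_sets(exercises, sets_per_exercise, sessions=SESSIONS_PER_WEEK):
    """Total weekly sets for a region: exercises x sets per exercise x sessions."""
    return exercises * sets_per_exercise * sessions


for region, n_exercises in EXERCISES_PER_REGION.items():
    totals = [weekly_sets(n_exercises, s) for s in SETS_PER_EXERCISE]
    print(f"{region}: {totals} sets/week")
```

Under that grouping the totals come out to 6, 18 and 30 for upper body and 9, 27 and 45 for lower body, matching the figures in the text.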

Reality Checking This Workout

Let me ask:

- How many people have you ever seen squat to failure voluntarily? By this I mean continue lifting until they fail mid-rep and either need a spotter to get them to the top or lower the bar to safety pins or dump it?

- How many could do 5 sets of squats to failure with 90 seconds rest with any decent poundage?

The answers will likely be

- Almost nobody. I’ve done it, I’ve had the occasional trainee do it. I’ve seen almost none do it on purpose. If failure occurs on squats, it’s usually because the person is a macho dipshit and something goes desperately wrong mid-set (or it’s a powerlifter missing a heavy max). But few, if anybody, do it on purpose.

- Literally nobody. Well, certainly no men. Due to differences in fatigue, women would be far more likely to survive this. But the study only used men, who would never survive it, so that doesn’t matter. A true set of 12RM and you have to lie down for a few minutes. 5 sets of 12RM on 90 seconds? Maybe with 95 lbs on the bar and then the first set wouldn’t be to failure. What you’d probably see is a decent first set and the poundages or reps dropping excruciatingly with every set. This would be the definition of junk volume, and 2-3′ rest between heavy sets of squats anywhere near limits would be a minimum. I’d love to see what poundages were actually used in the high-set workouts because I bet they started ok and ended up as jack shit.

Which once again raises immediate questions about the study in the sense that the high-volume workouts were basically impossible to complete but no matter.

Where are the Body Composition Measurements?

Body composition was, shockingly, not measured (even post-training weight wasn’t reported which is a bizarre oversight) especially given that the head researcher apparently likes to crow about his super amazing Inbody body comp device in his lab.

Sure, BIA is shit but if you have this amazing gizmo, why not use it? Guess they couldn’t find the time with all the not pre-registering, doing the unblinded Ultrasounds, along with figuring out how to misrepresent the Ostrowski data to change its actual conclusion to match theirs.

It’s simply an insane and unacceptable oversight.

Biceps, Triceps and Rectus Femoris Hypertrophy

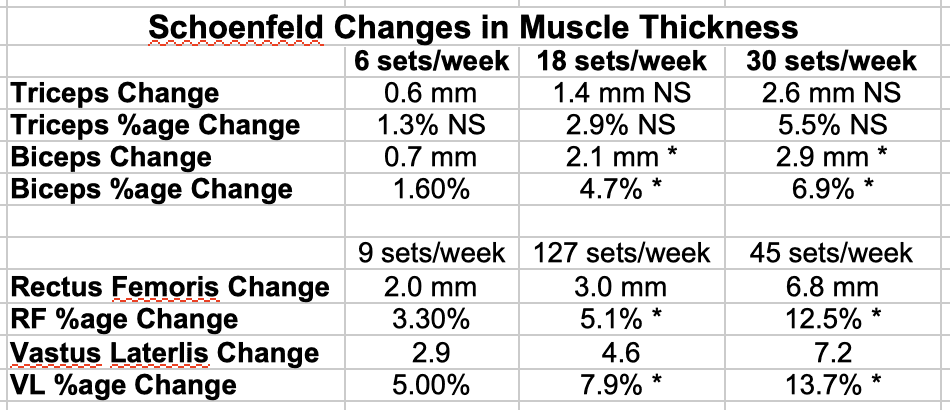

However, muscle thickness for triceps, biceps, vastus lateralis and rectus femoris was measured by Ultrasound. I’ve presented the data for each muscle group in the chart below. All that is presented is the mm change for each muscle and the %age change this represents (I did this by dividing the average change by the average starting value in the study).

I’ve indicated sets as sets/week for upper and lower body. I’ve indicated, using THEIR statistical methods which groups were different from which group. An asterisk means it is different from a non-asterisk but equal to any other value with an asterisk. NS means non-significant statistically.

Ok, let’s look at the results.

Triceps

So despite a numerical change, triceps showed no significant differences between groups even if the absolute values were kind of different. 1.4 is over double 0.6 and 2.6 is nearly double that. But it was non-significant in a statistical sense. But this is the beauty of statistics where an apparent real-world change can be statistically irrelevant but an irrelevant real-world change can be significant. Thing is, if you choose your statistical limits and the study doesn’t reach it, that’s it.

Biceps

For biceps, the 18 and 30 set/week groups were not statistically different from one another but both were higher than the 6-set group (and somehow smaller absolute changes in this case were significant). This is likely due to it being statistically underpowered and I guess there is a small trend for 30 sets to be superior but that’s an enormous increase in volume and training time to get a relatively smaller increase (12 more sets/week for 0.8 mm).

Basically, the percentage change kind of hides the fact that going from 6 to 18 sets got 300% more growth and adding another 12 sets got only 40% more. Even if the higher volumes are superior, it’s a terrible return on investment.

Rectus Femoris and Vastus Lateralis

The same is true for the RF and VL changes. Both the 27 and 45 set groups were better than the 9 set group but were NOT statistically different from one another. But there is a visible trend present. Clearly 12.5% is more than double 5.1% and 13.7% is a little less than double 7.9%. Like in Ostrowski, the visible trend simply didn’t show up as a statistical difference.

Let me note that the above is true for all statistical methods applied, there was NO statistical difference between the moderate and high volume groups although both were better than the lowest volume groups. This actually makes sense given the general improvement in growth up to 10+ sets.

But there is also the issue of the spread of sets. From 6 to 18 sets up to 30 is big although 9 to 27 to 45 is enormous. What happens in-between those values? We don’t know.
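The "double" comparisons quoted in the triceps and RF/VL discussions can be checked with a few divisions. This sketch uses only the numbers that appear in the text; which group each value belongs to is taken from the chart and is not encoded here.

```python
# Pairs of (larger, smaller) values exactly as they are compared in the text
pairs = {
    "triceps mm: 1.4 vs 0.6": (1.4, 0.6),
    "triceps mm: 2.6 vs 1.4": (2.6, 1.4),
    "RF/VL %:    12.5 vs 5.1": (12.5, 5.1),
    "RF/VL %:    13.7 vs 7.9": (13.7, 7.9),
}


def ratio(larger, smaller):
    """How many times bigger the first value is than the second."""
    return larger / smaller


for label, (larger, smaller) in pairs.items():
    print(f"{label} -> {ratio(larger, smaller):.2f}x")
```

Ratios above 2 correspond to "more than double" and ratios a bit under 2 to "nearly double", so the wording in the text holds up on its own numbers.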

Bayesian Statistics

Now when the P-value statistics failed to show a benefit, James Krieger invoked something called Bayesian statistics. You can think of this as representing the probability that one hypothesis or the other is true. So you look at a value called BF10 or BF01, which are simply the inverse of one another.

And in Bayesian statistics, there are fairly standard interpretations of what the values mean in terms of the support for a given hypothesis with larger values meaning more support. And until you get up to about 20 (depending on the source), it doesn’t mean much.

Here the BF10 values were like 1.2 for the 3 set and 2.4 for the 5 set group and this was claimed as double the probability of a real difference for the higher volume group to be superior by James Krieger over and over, usually to deflect my direct questions. But in Bayesian statistics numbers that low are called a weak effect (as Eric honestly reported even if he still concluded it meant something).

As a friend with much more experience in statistics explained.

Know what another way to say “weak” effect is? “Not worth more than a bare mention” (that comes from the Jeffreys (1961) reference that the Raftery (1995) paper [cite 20] refers to).

Cite 20 was in Brad’s paper and Jeffreys is probably one of those ancient statistics papers. Basically they reference a paper on Bayesian statistics that says that those BF values below 3 are barely worth a mention (until they get to like 100+ they just don’t mean anything).
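To make the scale concrete, here is a minimal Python sketch of how a Bayes factor gets read. It assumes the commonly cited Raftery (1995) grades (1 to 3 weak, 3 to 20 positive, 20 to 150 strong, above 150 very strong). Those cut-offs and the function names are assumptions for illustration, not something taken from Brad’s paper, and other sources draw the lines a little differently.

```python
def bf01_from_bf10(bf10: float) -> float:
    """BF01 is simply the reciprocal of BF10."""
    if bf10 <= 0:
        raise ValueError("a Bayes factor must be positive")
    return 1.0 / bf10


def evidence_grade(bf10: float) -> str:
    """Label support for the alternative using Raftery-style grades (assumed cut-offs)."""
    if bf10 < 1:
        return "favors the null"
    if bf10 <= 3:
        return "weak"
    if bf10 <= 20:
        return "positive"
    if bf10 <= 150:
        return "strong"
    return "very strong"


# Approximate BF10 values quoted above for the 3 set and 5 set conditions.
for label, bf10 in [("3 set", 1.2), ("5 set", 2.4)]:
    print(label, round(bf01_from_bf10(bf10), 2), evidence_grade(bf10))
```

Under these assumed grades both values get the same label, so going from 1.2 to 2.4 doubles the number without moving it out of the lowest grade.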

Yet Brad and James et al. used it to draw their conclusion and James continually repeated it in lieu of answering direct questions. I believe his response to me was “in Lyle’s world a doubling of the chance of rain isn’t important.” Not when the doubling is from 0.1% to 0.2% it’s not, James. Is it in yours?

Because the simple fact is that double jack shit is still jack shit. And the BF10 values mean that the support meant jack shit. It was a cute deflection by James but that’s all it was.

Basically, the paper was really struggling to make the highest volume group look better than the moderate volume group and I’ll link out to the extremely detailed statistical analysis my friend did on the topic for anybody interested in this.

Basically none of the statistical metrics used support that the high volume group was better than the moderate volume group although I will still acknowledge that there was a trend, just as with Ostrowski (it would be disingenuous for me to acknowledge it for one paper but not this one).

My Other Issues

I’ve brought up my other issues with this study endlessly and said at the outset that I wasn’t going to harp on methodological issues one way or another for any paper and it would be unfair of me to do it for this one only.

If Eric thinks that the argument “Well other papers are methodologically flawed so it’s ok for this one to be” is correct, I’ll concede the point and apply that rule. This makes all studies fair game by his own argument (recall that I dismissed Radaelli on the totally nonsensical results, not the methods).

He opened the door, I get to walk through it. Eric, you played yourself.

One oddity is that, Eric’s and James’s attempt to dredge up nonsense about leg extension volume load notwithstanding (data once again NOT reported in the paper and ONLY brought up when they were desperate to maintain the bullshit), the strength gains in all groups were identical over the length of the study.

This fails the reality check so hard it hurts. It contradicts literally every past study on the topic showing that strength gains increase with increasing volume (at least up to a point). Several suggestions were made from the length of the study to the repetition ranges that were used. But it still fails the reality check.

However, in that muscle size and strength show a strong (but imperfect) relationship, the lack of differences in strength gains implies something else: a lack of differences in muscular size gains. Same size gains, same strength gains. QED. Note my use of the word IMPLIES. But there is a big issue when this paper runs basically opposite to every other one. One argument I’ve seen is that the short rest periods prevented the weights from being heavy enough. Well, that’s true.

Brad himself has done a paper showing that longer rest intervals beat out shorter. So why design the study like this to begin with? Brad says it was for practical reasons, to keep the workout about an hour. Which raises another practical issue about insane volumes: with a real rest interval, workouts with this much volume are impossibly long (I believe the 32 set workouts in Haun were about 2 hours).

But it still fails the reality check and contradicts all other literature on the topic. Brad has since spun this to the NY Times as “You can make strength gains with 13 minute workouts”. Which is probably true for beginners but totally absurd in anybody beyond that level.

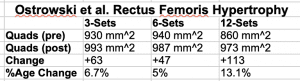

Now, acknowledging that there is a trend (albeit one that could not even be forced into being statistically significant despite throwing multiple statistical methods at it), let’s go back to the Ostrowski data since that is what Brad compared his results to in his paper’s discussion in terms of muscle growth. I’ve reproduced it from Part 1.

In doing so, Brad represented the leg data as 6.7% for the low volume group and 13% for the high (3 and 12 sets respectively) stating that this is similar to his own data. This is accurate but incomplete and you have to wonder why he left out the middle data point.

Was it to save ink (on a PDF and yes I know that the article is also printed, spare me), was he running out of words? The journal doesn’t have a word count limit on the manuscript (it says on average 20 pages) even if he claimed that it does.

So that’s not it. It’s not even as if adding that information would have added more than like 3-4 words. Allow me to demonstrate:

Brad wrote this:

“Ostrowski et al. (11) showed an increase of 6.8% in quadriceps MT for the lowest volume condition (3 sets per muscle/week) while growth in the highest volume condition (12 sets per muscle/week) was 13.1%”

36 words

I can rewrite this as:

Ostrowski et al. (11) showed a dose-response for growth in the quadriceps of 6.8%, 5% and 13.1% for 3, 6 and 12 sets/week respectively

26 words.

Perhaps Brad should hire me to help him with the writing of his papers. I saved him 10 words and accurately represented the data. But he didn’t want that, clearly.

Schoenfeld vs. Ostrowski

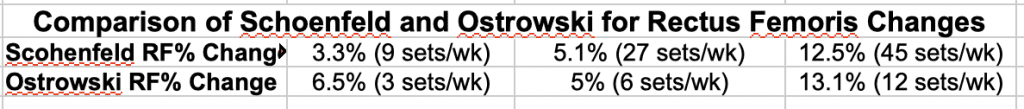

But let’s look at this side by side. Below I’ve presented Ostrowski’s data for his 3, 6 and 12 set groups and Brad’s for his 9, 27 and 45 set groups. I can only compare percentages here since the Ostrowski data was presented in mm^2 and there’s no way to back calculate that to mm to compare it directly.

Ok, so yes, it is true that both studies showed about 13% growth with the highest volumes. Mind you, Ostrowski got DOUBLE the growth with only 3 sets that Brad needed 9 sets to get and even the 6 set/week group equalled his 27 set group. And while the third groups got roughly the same RF growth, we have to ask why it took 4 times as much volume in Brad’s study to accomplish this?

Seriously, in what world do you need 45 sets/week to achieve the same growth as another study got in 12 sets/week? In discussing this discrepancy, Brad says this.

Interestingly, the group performing the lowest volume for the lower-body performed 9 sets in our study, which approaches the highest volume condition in Ostrowski et al. (11), yet much greater levels of volume were required to achieve similar hypertrophic responses in the quadriceps. The reason for these discrepancies remains unclear.

Basically a shoulder shrug and “dunno”. Not even a speculation as to why. Fantastic.

He actually tried to defend this on his website by writing the following:

“Some have asked why we did not discuss the dose-response implications between their study and ours. This was a matter of economy. Comparing and contrasting findings would have required fairly extensive discussion to properly cover nuances of the topic.

Moreover, for thoroughness we then would have had to delve into the other dose-response paper by Radaelli et al, further increasing word count. Our discussion section was already quite lengthy, and we felt it was better to err on the side of brevity. However, it’s certainly a fair point and I will aim to address those studies now.”

This is seriously sad. First off the journal doesn’t have a word limit on manuscripts so who cares for economy? Second off, it’s good scientific practice to examine the discrepancies between your work and previous research to actually try to forward the field by determining what the explanation might be. Third, with respect to Ostrowski, what nuance?

The study design was nearly identical, the weekly set count nearly identical, the training status nearly identical. There’s no nuance and the results are the results and the FACT is that Brad needed 4 times the volume to get them and should try to explain why.
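The size of that gap is easy to check. The sketch below just divides the weekly quadriceps set counts for matching groups in the two studies; the dictionary and function names are made up for illustration, and pairing low with low, moderate with moderate and high with high is my own assumption about how to line the groups up.

```python
# Weekly quadriceps sets per group, as described above.
ostrowski_sets = {"low": 3, "moderate": 6, "high": 12}
schoenfeld_sets = {"low": 9, "moderate": 27, "high": 45}


def volume_ratio(group: str) -> float:
    """How many times more weekly sets Schoenfeld's group did than Ostrowski's."""
    return schoenfeld_sets[group] / ostrowski_sets[group]


for group in ostrowski_sets:
    print(f"{group}: {volume_ratio(group):.2f}x the weekly sets")
```

For the highest volume groups the ratio is 45/12, which is 3.75, so “4 times” is a rounded figure.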

Radaelli is an issue because that paper is a mess but so what. He was happy to address it in the STRENGTH data and examine the discrepancy, writing this:

However, for the bench press and lat pulldown exercises, the 30 weekly set group experienced greater increases than the two other groups. Given that their subjects did not have any RT experience it might be that the greater strength gains in the 30 weekly set group are due to the greater opportunities to practice the exercise and thus an enhanced “learning” effect (22).

Also, their intervention lasted 6 months while the present study had a duration of 8 weeks. It might be that higher training volumes become of greater importance for strength gains over longer time courses; future studies exploring this topic using longer duration interventions are needed to confirm this hypothesis.

But what, by the time he got to the GROWTH data, he didn’t have the energy or time to discuss the differences? Please. But I guess when he’s putting out 5 papers per month, Brad is probably too time stretched to actually do something properly.

My Speculation

Anyhow, since Brad is too time crunched to do it, I will speculate on the possible reasons for the discrepancy in the growth data. I think a possibility, and this is impossible to know given that Ostrowski failed to report on their rest intervals, is that the absolutely sub-optimal rest interval in Schoenfeld’s study was the issue.

Basically, when you train on 90 seconds and can’t use heavy loads, maybe you need an absolute metric ton of junk volume to get the same growth as you could get doing 12 quality sets. This is MY speculation and nothing more but at least I provided one when he couldn’t.

Perhaps Brad should get me to help him with the discussion in the future since he clearly can’t do it himself. As noted above, I guess when you have to get your name on 45 papers per year (as of October 2018), you don’t have the time.

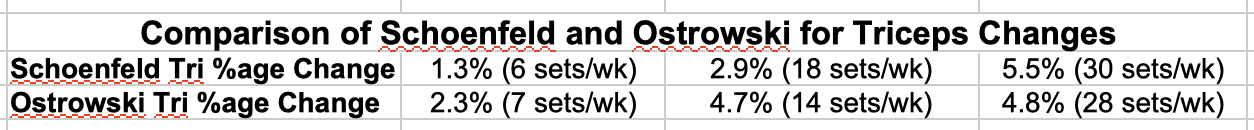

Triceps Hypertrophy

Now we come to the triceps data. First let me reiterate that Brad deliberately misrepresented the data set here, reporting that Ostrowski found that 28 sets gave better results than 7 sets (4.8% vs. 2.3%) which was broadly similar to his results.

This is technically true but inaccurate and misleading since Ostrowski found a plateau at 14 sets, said data going unreported (arguably why he left out the middle data for the thigh changes, to establish the pattern). By leaving out this data point, Brad changed the results of Ostrowski from disagreeing with him to agreeing with him. This is called lying.

Honestly, that single fact should disallow this finding on every level and this paper should have never passed peer review for that alone (too bad I wasn’t on the peer review since I caught the Ostrowski lie upon my first read and I bet Brad wishes he hadn’t sent me the pre-publication paper at all).

Regardless of that, let me look at the side by side data in terms of %age gains.

So we see a similar pattern to legs. First, Ostrowski got almost double the %age growth with 7 sets/week that Brad got with 6. Moving up to 14 sets/week, Ostrowski still crushed Brad’s 18 set/week group. And ignoring the misrepresentation of the data, it took Brad 30 sets/week to achieve a little more growth than Ostrowski got in 14.

So, just as with legs, it took over double the volume to achieve only a slight improvement. So just like with the leg data we ask why it seemed to take twice as much volume to generate the identical growth in Brad’s study (bonus question: why will nobody directly address Brad’s lie about this data set?).

Now, since Brad misrepresented the data to make it look like it supported him, there was no discussion of the discrepancy. It makes me wonder why more researchers don’t simply lie about data. It would save so much time discussing reasons why it disagrees with you. Just lie about the data and, boom, all research agrees with you. The amount of time it would save typing is enormous.

As with the leg data, I’d speculate again that the brutally suboptimal design of the workouts in Brad’s study is again at fault. Even for upper body how many can do a lot of RM load sets on a short rest interval? Not many.

But again maybe you need twice as much volume when those sets are lower quality junk volume. Simply put, Ostrowski got the same growth as Brad’s with 1/2 as many sets. Why do double for essentially the same results (doubling your volume for a small %age increase is asinine)?

The ECW Issue

There are other issues relating to this paper but I’ll only focus on the one that the Haun paper recently brought into light which is the issue of ECW and water retention which only appears to become an issue when more than 20 sets/week are done (note: Brad couldn’t have known about this paper since it came out a week before his so I can’t say he ignored it).

As a reminder, for the upper body workout, the set count was 6, 18 and 30. Only the last one hits the volume level of 20+ sets where ECW might be relevant. For legs it was 9, 27 and 45 so both the moderate and high volume group clear it.
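As a quick check on which groups are affected, here is a small Python sketch that filters the weekly set counts against that 20 set line. Treating the line as a hard cut-off is an assumption for illustration only (nobody has shown it is a sharp threshold), and the names are hypothetical.

```python
ECW_THRESHOLD = 20  # weekly sets above which ECW might matter (assumed hard cut-off)

weekly_sets = {
    "upper body": [6, 18, 30],
    "lower body": [9, 27, 45],
}


def groups_over_threshold(sets, threshold=ECW_THRESHOLD):
    """Return the weekly set counts that exceed the threshold."""
    return [s for s in sets if s > threshold]


for region, sets in weekly_sets.items():
    print(region, groups_over_threshold(sets))
```

That leaves one upper body group and two lower body groups in the range where the question applies.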

As above, do we know that ECW impacts on Ultrasound? No. But we don’t know that it doesn’t (and there’s endless other work that edema is still present at the 48-72 hour time point Brad measured at anyhow even if James Krieger is desperate to dismiss it).

Haun clearly showed that it skewed the results hugely at high volumes and it needs to be studied and addressed. But the issue only applies to 3 of the groups in this study (30 set upper body, 27 and 45 set lower body). And if ECW turns out to skew the Ultrasound measurements, then even the trend towards better growth may very well disappear or be a measurement artifact.

Note I said may, not will and it may turn out not to have an effect (and I’ll accept that). It has to be studied so we can know for sure.

Rather, based on the detailed analysis of the statistics, it’s clear that the high volume group did in fact NOT do better than the moderate volume group although there was an apparent visible trend based on absolute mm change and %age change.

Not by P value and not by Bayesian factors (which were too small to be relevant because double jack shit is still jack shit, James) and not by any nonsensical argument by the people involved or those defending it.

Going forwards, I’m going to treat this study as if the moderate volume group did best because that is objectively what the statistics showed. I’ll look at the high volume group too but NOTHING about this study supports the conclusions being drawn. NOTHING.

You can agree or not. As I said above, Brad’s lie alone should dismiss the paper out of hand but I’m keeping it in so that I won’t be accused of bias or simply making data I don’t like disappear (you know, like Brad did with Ostrowski). So I’ll keep it in. But I will actually adhere to the statistical findings.

Because even if you take the highest volume results at face value, you’ll see next week that it contradicts the other 5 papers I will have looked at. And, as I discussed in Part 1, we base models on the overall data, not the one study (as James so helpfully pointed out despite the fact that only HIS group of folks were doing it). If 1 study is the outlier on the other 5, we ignore the 1 until it’s replicated. And in this case it must be replicated

BY ANOTHER LAB.

Brad can continue to churn out studies showing that volume is all that matters but I suspect that looking at all of them in detail would turn up an equal amount of shenanigans (a project for another day). As he’s now shown that he is willing to lie in a discussion to change the conclusion of a contradictory paper, nothing he puts out from here on out can be taken at face value: what’s to stop him from lying about data again?

If ONLY he can produce these results, then we have another issue (remember, not only is replication required but is better if someone else does it). If another lab, perhaps one that thinks he’s wrong, replicates it, then we can consider it. Let’s get Jeremy Loenneke on the job, hahahaha (in-joke, sorry).

You’ll also see in Part 3 that if we take into account another issue that has only recently been brought up (by Brad himself), that even if we take the highest set counts at face value as generating higher growth, it still stops mattering. You’ll have to wait until next week for that.

Summary

Despite their attempts to make volume happen, Brad’s own statistics at best support that moderate volumes of 18 sets for upper body and 27 for lower body give better growth than lower volumes with statistically irrelevant support for the highest volumes of 30 and 45 sets being any better (there was a trend that did not reach significance by any of the methods used).

Even if you accept the highest volume data, it doesn’t change the fact that Brad needed 2X and 4X the volume to get the SAME growth as Ostrowski with him attempting to defend why he did NOT explain the discrepancy. And that was with a lie about the journal having a word limit for the manuscript which is objectively untrue.

Since the leg volumes vary from a low of 9 sets to a medium 27 it’s impossible to know if a value between that level would have generated different results. But it’s a huge spread of volume and an 18 set group would be very informative. But they didn’t do it, the data is the data and we don’t know what might have happened (any speculation I could make is colored by my own bias so I won’t make one).

Also, the lack of differences in strength gains between groups goes against literally every other study on the topic where more volume, up to a point, leads to greater strength gains. Literally every study.

Brad speculates on the reason but it still contradicts a lot of other data. But the most parsimonious explanation, given the general relationship of muscle size and strength, would simply be that the gains in muscle didn’t differ. Occam’s Razor folks, now available from Dollar Shave Company. Same muscular gains equal same strength gains. QED.

This study just fails the reality check so hard it hurts. The workouts can’t possibly have been completed to begin with using any decent poundages, the lack of strength gains scaling with supposed size gains contradicts all previous data, etc. It goes against every other study. So either they are all wrong or it is wrong and well…..

Couple that with the deliberate misrepresentation of the data of Ostrowski and this study has some real issues. It failed half of the criteria Greg Nuckols himself laid out in MASS methodologically even if Eric still said “Good study, broseph” (and Greg tried to somehow dismiss what I wrote last time).

But, I’m nice, I won’t dismiss it out of hand like I did with Radaelli because then people will say I’m biased against Brad and I’ll include it in the rest of my analysis. Even IF its results are valid, and I clearly don’t think they are, it won’t end up mattering.

Dose-Response of Weekly Resistance Training Volume and Frequency on Muscular Adaptations in Trained Males

And on the heels of all of that is a brand new paper that came out a week after Brad’s by Heaselgrave et al. and published in the Int J Sports Physiology and Performance. In it, 49 resistance trained males (1 year or more consistent training so not untrained) were put in either a low, moderate or high volume group that did 9, 18 or 27 sets for biceps for 6 weeks.

This was accomplished with a workout of biceps curls, bent over row and pulldown and the workout structure is a little bit goofy, unfortunately having both a frequency and volume component which adds an unnecessary second independent variable.

Training Volume

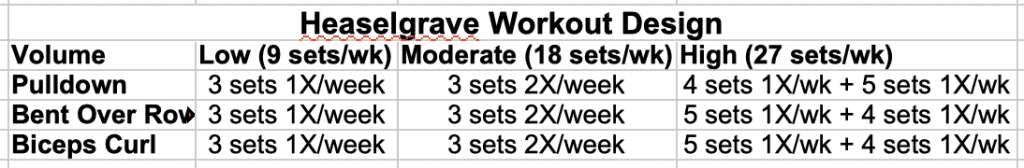

The low volume group trained once weekly performing 3 sets of each exercise for a total of 9 sets. The moderate group performed that workout twice per week for a total of 18 sets. The high volume group did one workout consisting of 5 sets of curls and row and 4 sets of pulldowns (14 sets) and a second workout of 4 sets of curls and row and 5 sets of pulldowns (13 sets) for a total of 27 sets. I’ve replicated it to the best of my ability below with the + in the high group referring to the second workout.
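The set totals can be tallied directly from that description. This is only a sketch of the arithmetic, with a hypothetical `protocols` layout; note that it counts every set of rows and pulldowns as a full biceps set, since that is the only way the totals come out to 9, 18 and 27.

```python
# Sets per exercise in each weekly workout: (curls, rows, pulldowns).
protocols = {
    "low": [(3, 3, 3)],
    "moderate": [(3, 3, 3), (3, 3, 3)],
    "high": [(5, 5, 4), (4, 4, 5)],
}


def weekly_sets(workouts):
    """Total weekly sets, counting each exercise set as one biceps set."""
    return sum(sum(workout) for workout in workouts)


for group, workouts in protocols.items():
    per_workout = [sum(w) for w in workouts]
    print(group, per_workout, weekly_sets(workouts))
```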

They did sets of 10-12 with a goal RIR of 2 reps (so close to failure), starting at 75% of 1RM and a 3 minute rest between sets. The workouts were overseen with a lifting tempo of one second up and 3 seconds down.

Basically they lifted heavy weights, near limits and used a long enough rest interval to keep the loads heavy and as they state “…to maximize MPS [muscle protein synthesis] and strength gains.”

The Big Methodological Issue

The subjects were allowed to work out outside of the study which is a HUGE methodological problem but they provided workout logs in order to show that they were not doing extra biceps work. This unfortunately makes it easy to hand-wave these results away which is more or less what Brad tried to do online. How do we KNOW that they didn’t do more arm work?

Well we don’t so we kind of have to trust them and their self-reporting. And since Brad said he can do studies unblinded because you can trust him, I think it’s only fair to apply the same standard here unless he wants to call the study subjects liars.

I for one would hope Brad would not impugn someone’s integrity in such a fashion unless he is the only human in the world who can be trusted. Shouldn’t we give the subjects of this study the benefit of the doubt?

Of course, I could claim on any study I didn’t like that the subjects did extra training outside of the study (call it The Colorado Experiment effect). In Brad’s study, in Haun’s study, nobody can say for sure if the subjects did more training outside of the study itself. Unless you lock them up in a metabolic ward, you can never know for sure.

But applying this standard to a study that contradicts him is sure convenient, isn’t it?

Is this design ideal? No. It simply is what it is and I’m describing this limitation up front because that’s the honest thing to do and I think the results are still worth examining. And if I’m keeping in Brad’s methodological shit show of a study (remember, failed about HALF of Nuckols’ methodology list) and Eric says it’s ok if one study is unsound because others are, I’m keeping this too.

They opened the door, I get to use it.

Muscle Thickness Changes

Looking at muscular thickness, the average changes in size were 0.1 cm (1mm) for the low volume group, 0.3 cm (3mm) for the moderate volume group and 0.2 cm (2mm) for the high volume group.

Now all three groups were significantly different from the initial value. However, as with Ostrowski, there was no difference between groups. That is, statistically the researchers conclude that all groups grew the same.

At the same time, as with Ostrowski there is a visible trend (admittedly with LARGE variance) from low (1 mm) to moderate (3 mm) back to high (2 mm). Unfortunately the researchers did not provide the starting or ending levels, only the change so I can’t calculate the percentage changes here. And I am hesitant to eyeball it as it will reflect my own bias.

At the same time, statistically insignificant or not, the absolute values are similar to the Ostrowski triceps data (1mm, 3mm, 2mm here vs. 1 mm, 2mm, 2mm in Ostrowski). If Brad Schoenfeld et al. get to use that magnitude of non-significant changes (albeit misrepresented) I feel as if I have the same right.

They opened the door, I get to walk through it. An alternate conclusion, and this would be consistent with other work would be that all groups did in fact do the same and that 9 sets was just as effective as 18 or 27. You can take your pick on which you think is correct.

What kind of stands out is the individual variance (and this is always an issue with these studies, hi James). Clearly some subjects in the low volume group grew better than others in the moderate volume group (which has both the highest AND lowest responder).

The high volume group is more well clustered together certainly. But if other papers are going to look at average response, I’m using this one’s average response for consistency. And on average, moderate volumes beat out low and high volume was either equal to or even a bit less than moderate.

Summary

While the researchers reported no difference in growth from low to moderate to higher volumes there is a trend towards better growth from 9 to 18 sets with no further growth at 27 sets. Again we see a cap/threshold at moderate volumes.

And with that final study out of the way, I’ll cut it here. Next week in the third and final part, I’ll look at the studies in the aggregate to see if any general patterns show up along with re-addressing the set counting issue. Don’t worry, this is almost over.

Read Training Volume and Hypertrophy: Part 3.
